Perhaps the most exciting part of the digitalization of healthcare is not digitalization per se. Rather, it is bridging the physical world of people and objects with the virtual world of digital information. A prime example is the ‘digital twin’: a virtual model of a system that is updated dynamically through a diverse set of sensors connecting it to its physical counterpart. Digital twins will enable us to analyze systems remotely in real time, prevent problems before they occur, and test new products in virtual environments before building them. As outlined in my previous post, we have already taken the first exciting steps in this direction.

This raises a fascinating question: if a digital twin of an MRI scanner can help you predict when a physical part needs replacement, and guide repair, could we apply the same concept to discover and treat ailments in the human body before they become apparent?

The basic idea of such a “digital patient” is the same: if you integrate different measurements of a person over time, you can build a digital model of a body part such as an organ, and eventually an integrated model of their anatomy and physiology, so you can better understand how they function. The ultimate vision is to have a lifelong, personalized model of a patient that is updated with each measurement, scan or exam, and that includes behavioral and genetic data as well. As one surgeon said, “I see the digital patient as an opportunity to bring together all the information on a particular patient.” This could support diagnosis, treatment planning, better targeted therapy delivery, and lifestyle interventions.

We are a long way off from having a full digital patient. But there is one part of the body where digital twin technology has already demonstrated promising applications, and that is the heart: the pump that fuels life.

Every heart is unique – and why that poses a challenge to clinicians

Your heart is as vital to your health as it is vulnerable to disease. Cardiovascular diseases (CVDs) take the lives of 17.7 million people every year – almost one third of all deaths worldwide. Early detection and prediction of the progression of CVD are essential for saving lives through improved treatment.

However, despite significant advances in medical imaging techniques like MRI, CT and ultrasound, determining optimal treatment plans for patients with CVD remains challenging. Medical images provide a wealth of information, but it is difficult for a clinician to reconstruct and interpret the anatomy of a patient’s heart from a set of 2D images. Yet this is a crucial step in understanding the nature of a CVD and in planning and guiding interventions.

Adding to the complexity, every heart is different. For example, your heart chambers may be shaped slightly differently from mine. This limits the usefulness of general, fixed anatomical models that are based on average population data.

How, then, can we get a good understanding of an individual patient’s heart? Think digital twin. Unlike traditional anatomical models, which describe organs in a general way, a digital twin reflects the particular – it captures the idiosyncrasies that make your heart unique.

General anatomical models based on population data do not capture the unique characteristics of your heart. A digital twin does.

A full digital twin of the heart does not yet exist. But a beginning has been made, and we are working hard to make this concept a reality.

A look into the heart

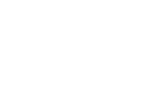

In 2015 we launched Philips HeartModel – a clinical application that allows cardiologists to assess several cardiac functions that are relevant to the diagnosis and treatment of patients with CVD. It automatically generates 3D views of the left heart chambers of a patient, based on a set of 2D ultrasound images. HeartModel also calculates how well the heart is pumping out blood, which is an important indicator of possible heart failure [5].

How does it work? HeartModel is not an empty digital shell when it is fed with images of your heart. It has prior knowledge about the general structural layout of the heart, how the heart location varies within an image, and the ways in which the heart shape varies. We incorporated this knowledge into the model by training it on approximately one thousand ultrasound images from a wide variety of heart shapes and sizes (the learning phase in the image below). Based on the unique images of your heart, HeartModel adapts the generic model into a personalized one (the personalization phase in the image below).
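To make the pumping measure concrete: it is commonly reported as the ejection fraction, the topic of reference [5], which is the share of blood in the left ventricle that is pushed out with each beat. The sketch below shows only the standard formula. The function name and the idea that both volumes are read off a 3D chamber model are assumptions for illustration, not a description of how HeartModel is implemented.

```python
def ejection_fraction(end_diastolic_ml: float, end_systolic_ml: float) -> float:
    """Return the ejection fraction as a percentage.

    end_diastolic_ml: chamber volume when the ventricle is fullest.
    end_systolic_ml:  chamber volume right after contraction.
    (Hypothetical helper; volumes are assumed to be given in millilitres.)
    """
    if end_diastolic_ml <= 0:
        raise ValueError("end-diastolic volume must be positive")
    if not 0 <= end_systolic_ml <= end_diastolic_ml:
        raise ValueError("end-systolic volume must lie between 0 and the end-diastolic volume")
    # Stroke volume (blood ejected per beat) relative to the filled volume.
    stroke_volume_ml = end_diastolic_ml - end_systolic_ml
    return 100.0 * stroke_volume_ml / end_diastolic_ml
```

For example, `ejection_fraction(100, 40)` returns `60.0`: of 100 ml in the filled chamber, 60 ml is ejected.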

How HeartModel creates a personalized model of your heart
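The two phases can be illustrated with a textbook statistical shape model. This is a minimal sketch under stated assumptions, not the algorithm HeartModel uses: it assumes each heart is already described by corresponding landmark coordinates, uses principal component analysis as a stand-in for the learning phase, and runs on synthetic data. All function and variable names are hypothetical.

```python
import numpy as np


def learn_shape_model(training_shapes: np.ndarray, n_modes: int):
    """Learning phase: mean shape plus the main modes of shape variation.

    training_shapes has one row per training heart; each row holds the
    flattened (x, y, z) coordinates of corresponding landmarks.
    """
    mean_shape = training_shapes.mean(axis=0)
    centered = training_shapes - mean_shape
    # Rows of vt are orthonormal directions, ordered by explained variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return mean_shape, vt[:n_modes]


def personalize(mean_shape, modes, patient_shape):
    """Personalization phase: adapt the generic model to one patient.

    Because the modes are orthonormal, projecting onto them yields the
    least-squares weights for this patient.
    """
    weights = modes @ (patient_shape - mean_shape)
    return mean_shape + weights @ modes, weights


# Synthetic stand-in for landmark data (no real patient data involved).
rng = np.random.default_rng(0)
n_landmarks = 20
generic = rng.normal(size=3 * n_landmarks)
variation = rng.normal(size=(3, 3 * n_landmarks))
training = generic + rng.normal(size=(1000, 3)) @ variation

mean_shape, modes = learn_shape_model(training, n_modes=3)
patient = generic + np.array([1.2, -0.4, 0.7]) @ variation
personalized, weights = personalize(mean_shape, modes, patient)
print("max fit error:", np.abs(personalized - patient).max())
```

The point of the design is that the generic model carries the prior knowledge (the mean shape and the ways shapes tend to vary), so a single patient only needs a handful of weights to be described.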

The strength of this approach is that it combines scientifically proven knowledge of the anatomy of the heart with advanced data analytics. Conceptually, this is similar to how a digital twin of a device works. As you may recall from my previous post, a digital twin of a device also relies on prior knowledge of the device, combined with data analytics and modelling techniques.

It is the importance of human domain knowledge that often gets overlooked as data-driven methods become ever more prevalent and sophisticated. We could not have developed HeartModel without the close involvement of cardiologists. You need to understand the way they work. The way they think. The way they look at an image. Digital twin technology, like any other technology, should adapt to the needs and workflows of clinicians, helping them to better serve patients. (Which is why, in general, we like to speak of adaptive intelligence.) This is what I find so exciting about the digital twin paradigm: it brings together various kinds of experts – from clinicians to researchers and data scientists – and different knowledge domains – from physics, biology, and medical science to computer science, data science, image processing, and machine learning. True progress happens through interdisciplinary collaboration.

When every millimeter matters

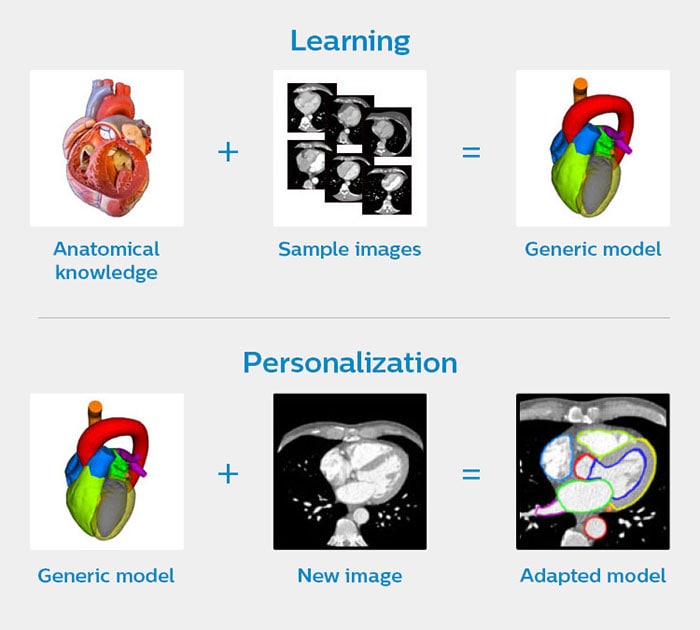

Another application of personalized models of the heart is image-guided therapy, where medical images are automatically integrated to guide surgical procedures. Let’s say you are a surgeon or an interventional cardiologist tasked with replacing a damaged valve in a patient’s heart. You are navigating through the patient’s arteries with a catheter that contains a replacement valve. Imagine the precision required. If you do not position the valve at exactly the right angle, it will be dislodged by the strong force of the blood pumped through the heart, or it will fail to function correctly, putting the patient at risk.

This type of minimally invasive procedure, known as transcatheter aortic valve implantation (TAVI), is gaining popularity because the chest doesn’t have to be opened – the catheter can be inserted via a small opening in the body. But the procedure demands accurate navigation. Every millimeter matters.

A tool like Philips HeartNavigator merges CT images captured before the procedure into a single image of the patient’s heart anatomy, and overlays it with live X-ray information during surgery. Beforehand, the tool simplifies procedure planning, helping the surgeon to select the right device. During surgery, it provides real-time 3D insight to position the device. The virtual becomes a guide for the physical, enhancing the skills of the surgeon.

Philips HeartNavigator combines CT images of a patient’s heart anatomy captured before the procedure into a single image with an overlay of live X-ray information during surgery

The heart in virtual and augmented reality

In the future, tools like HeartModel and HeartNavigator could be paired with virtual reality (VR) technology to enable lifelike simulations that help clinicians practice complex procedures. Medical education would also benefit, as students could practice on virtual patients. In fact, Stanford University is already using VR technology as a teaching tool, visualizing a general model of a beating heart in immersive 3D. Researchers are also developing patient-specific heart visualizations in VR, exploring their potential as a diagnostic instrument. With advances in 3D printing, patient-specific models could even be used to create customized prostheses and implants.

Equally promising is the use of augmented reality (AR) in the operating room, with a patient-specific 3D model overlaid on the patient’s body. AR enables a surgeon to ‘see through’ the skin and understand the underlying anatomy before making an incision. It can also serve as a navigational aid during procedures, potentially improving their accuracy. AR applications have shown encouraging first results in, for example, lower limb surgery and spinal surgery, paving the way for similar applications in heart surgery.

From a virtual heart to a full digital patient

Looking further ahead: what if we enriched patient-specific models of the heart by integrating information not only at an anatomical level, but also at an electro-physiological, biomolecular, and genomic level? Would we be able to predict heart diseases before they manifest themselves, and better tailor treatment and prevention plans to the unique characteristics of an individual patient? In a similar vein, could we develop digital twins for other organs, and eventually for the whole human body, to usher in a new era of personalized medicine? Right now, these are still visions for the future. There are many challenges that stand in the way of developing a full digital patient. In my next article, we will explore some of those challenges and ways of addressing them – so we can continue to bridge the physical and the digital world, for better patient care and healthy living.

References

[1] Roadmap for the digital patient (2016). http://www.vph-institute.org/upload/discipulus-digital-patient-research-roadmap_5270f44c03856.pdf

[2] WHO factsheet: World Heart Day (2017). http://www.who.int/cardiovascular_diseases/en/

[3] Weese, J., & Lorenz, C. (2016). Four challenges in medical image analysis from an industrial perspective. Medical Image Analysis, 33, 44-49.

[4] Smith, N., De Vecchi, A., McCormick, M., Nordsletten, D., Camara, O., Frangi, A.F., ... Rezavi, R. (2011). euHeart: personalized and integrated cardiac care using patient-specific cardiovascular modelling. Interface Focus, 1, 349-364.

[5] Ejection Fraction Heart Failure Measurement – American Heart Association (2018). http://www.heart.org/HEARTORG/Conditions/HeartFailure/DiagnosingHeartFailure/Ejection-Fraction-Heart-Failure-Measurement_UCM_306339_Article.jsp#.W0xaK6czaM8

[6] Virtual reality takes doctors on a ‘fantastic voyage’ inside hearts (2017). https://www.statnews.com/2017/04/13/virtual-reality-stanford/

[7] Bramlet, M., Wang, K., Clemons, A., Speidel, N.C., Lavalle, S.M., & Kesavadas, T. (2016). Virtual reality visualization of patient specific heart model. Journal of Cardiovascular Magnetic Resonance, 18(Suppl 1):T13. https://jcmr-online.biomedcentral.com/articles/10.1186/1532-429X-18-S1-T13

[8] Rengier, F., Mehndiratta, A., Tengg-Kobligk, H., Zechmann, C.M., Unterhin-Ninghofen, R., ... Giesel, F.L. (2010). 3D printing based on imaging data: review of medical applications. Int. J. Comput. Assist. Radiol. Surg., 5(4), 335-341.

[9] Pratt, P., Ives, M., Lawton, G., Simmons, J., Radev, J., ... & Amiras, D. (2018). Through the HoloLens™ looking glass: augmented reality for extremity reconstruction surgery using 3D vascular models with perforating vessels. European Radiology Experimental, 2. https://eurradiolexp.springeropen.com/articles/10.1186/s41747-017-0033-2

[10] Elmi-Terander, A., Skulason, H., Söderman, M., Racadio, J., Homan, R., ... Nachabe, R. (2016). Surgical navigation technology based on augmented reality and integrated 3D intraoperative imaging: a spine cadaveric feasibility and accuracy study. Spine, 41, 1303-1311. https://www.ncbi.nlm.nih.gov/pubmed/27513166

Author

Henk van Houten

Former Chief Technology Officer at Royal Philips from 2016 to 2022