From alarm fatigue to intelligent intervention: the promise of AI agents in acute care

Feb 27, 2026 | 3 minute read

In overstretched acute care settings, unnecessary interruptions are a constant drain. Could AI agents help prevent them and give valuable time back to care teams?

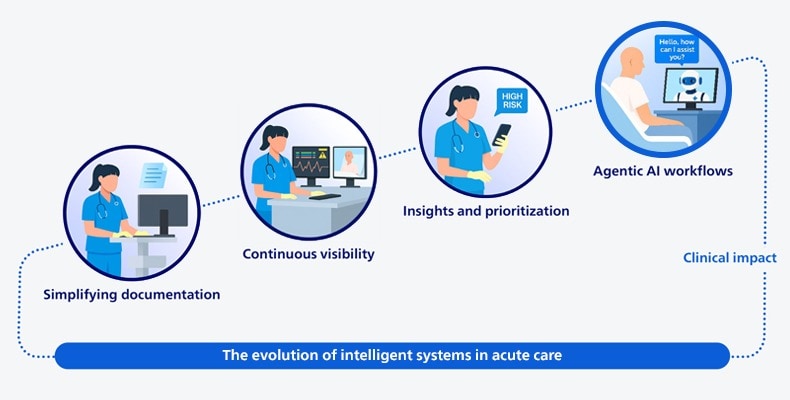

It’s 1:22 a.m. when Maria wakes to another alarm in her hospital bed. She’s 69, admitted with pneumonia, and finally asleep after a long day of treatments. The monitor shows her oxygen saturation has dropped. But when the nurse, Jayden, arrives, he spots an all-too-common issue: the pulse oximeter probe on Maria’s finger has slipped. Once adjusted, the reading returns to normal. Maria is now fully awake, her heart racing. Meanwhile, Jayden is already on his way to the next patient.

Scenes like this play out constantly across hospital wards, where nurses respond to a high volume of non-actionable alarms. Studies indicate that only 5% to 13% of patient alarms in acute care settings require clinical action [1]. The toll is felt on both sides: patients lose rest and peace of mind, while care teams experience increasing alarm fatigue, putting them at risk of burnout [2].

Now imagine the same scenario, but with a twist. Before the alarm escalates or reaches Jayden, an AI agent analyzes multiple sources of device data – combined with camera-based monitoring in the room – to determine that Maria’s probe has slipped from her finger. After assessing that she is awake and able to respond, the agent delivers a gentle audio cue prompting her to adjust the probe. Maria’s oxygen reading immediately stabilizes. The incident, the intervention, and the resolution are all documented automatically, without the need for manual charting. And Jayden? He stays focused on another critically ill patient and is notified only if the reading fails to recover or if the assessment signals a higher-risk situation requiring escalation.

Notice how, in this future scenario, technology does two things. It filters signal from noise, and it initiates an intelligent response. It’s this combination of reasoning and autonomous action that marks a fundamental shift in the evolution of patient care.

We are moving from systems that simply inform towards systems that act – autonomously resolving routine issues, returning time to clinicians, and escalating only when needed. That, in a nutshell, is the promise of agentic AI, and its implications for the future of acute care are profound.
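For readers who think in code, the decision flow in Maria’s scenario can be sketched roughly as follows. This is an illustrative sketch only, not a description of any actual product or clinical algorithm. Every name in it (`Snapshot`, `triage`, `follow_up`, `handle_alarm`) is hypothetical, the 90% threshold is a placeholder rather than a clinical recommendation, and the choice to escalate whenever the patient is asleep is an assumption made for the example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Action(Enum):
    NO_ACTION = "no_action"
    PROMPT_PATIENT = "prompt_patient"
    ESCALATE_TO_NURSE = "escalate_to_nurse"


@dataclass
class Snapshot:
    """Hypothetical fused view of device data and camera-based monitoring."""
    spo2_percent: float        # latest pulse oximeter reading
    probe_on_finger: bool      # assumed output of camera-based monitoring
    patient_awake: bool        # assumed output of camera-based monitoring
    other_vitals_stable: bool  # assumed summary of the other device data


# Placeholder threshold for illustration only, not a clinical recommendation.
LOW_SPO2_PERCENT = 90.0


def triage(s: Snapshot) -> Action:
    """Filter signal from noise: decide the first response to a low reading."""
    if s.spo2_percent >= LOW_SPO2_PERCENT:
        return Action.NO_ACTION
    likely_artifact = (not s.probe_on_finger) and s.other_vitals_stable
    if likely_artifact and s.patient_awake:
        return Action.PROMPT_PATIENT
    # Anything ambiguous or higher risk goes to the nurse.
    return Action.ESCALATE_TO_NURSE


def follow_up(after_prompt: Snapshot) -> Action:
    """Escalate if the reading fails to recover after the audio cue."""
    recovered = (after_prompt.probe_on_finger
                 and after_prompt.spo2_percent >= LOW_SPO2_PERCENT)
    return Action.NO_ACTION if recovered else Action.ESCALATE_TO_NURSE


def handle_alarm(initial: Snapshot, after_prompt: Snapshot,
                 audit_log: List[str]) -> Action:
    """Run triage and follow-up, recording each step for later review."""
    first = triage(initial)
    audit_log.append(f"triage: {first.value}")
    if first is not Action.PROMPT_PATIENT:
        return first
    second = follow_up(after_prompt)
    audit_log.append(f"follow_up: {second.value}")
    return second


# Maria's case: probe slipped, she is awake, and the reading recovers.
log: List[str] = []
result = handle_alarm(
    Snapshot(84.0, probe_on_finger=False, patient_awake=True, other_vitals_stable=True),
    Snapshot(97.0, probe_on_finger=True, patient_awake=True, other_vitals_stable=True),
    log,
)
```

In this sketch the default path is escalation: the agent acts on its own only when every condition for a low-risk artifact is met, and each step is written to the log so the care team can see what was done and why.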

The evolution of intelligent systems in acute care: from systems that inform towards systems that act

A new partnership at the bedside

At first glance, the idea of adding AI agents to the care team can raise reasonable concerns. Will they place another layer of technology between providers and patients? Could they add even more alerts, clicks, and complexity? These are fair questions. Yet Jayden and Maria’s story suggests that a different future is possible – one where technology removes friction rather than creating it.

When AI agents are designed to operate almost invisibly in the background, handling repetitive, low-risk tasks that consume time but add little clinical value, they free clinicians to focus on what matters most. An AI agent might automatically annotate and generate reports for ECG strips, or help assess discharge readiness based on real-time clinical data. In neither case does it replace the clinician. It simply helps reduce their cognitive load. That’s the north star we’re working toward.

It’s also a practical necessity. Persistent staffing constraints in acute care mean the answer cannot be adding more people to meet rising patient volumes and acuity. Healthcare leaders I speak with are candid about the reality: “We can’t hire our way out of this,” they tell me, and they’re right. The solution is to rethink how work gets done. That means defining a new kind of partnership between human caregivers and digital ones: one in which AI agents handle routine, time-consuming tasks within clear guardrails, and in which clinicians focus on the kind of complex, relational work that requires human judgment and care.

Four early lessons from the field

We can only achieve this vision in close partnership with healthcare leaders and clinicians. Here are four things that are guiding our efforts, and what we’re learning along the way:

1. Start with clinical reality, not technological capability. Too many AI initiatives in healthcare – including agent-based systems – don’t make it beyond the lab because they are built around what the technology can do, rather than what clinicians actually need. AI agents will only succeed if they are grounded in real-world care delivery. That begins by having direct conversations with frontline teams. Where are they facing interruptions? What consumes their time without improving care? Which tasks are accumulating because roles remain unfilled? Those questions should shape the design of AI agents. That also means thinking carefully about how AI agents fit into the way clinicians work. They can’t be bolted on as an afterthought, but need to be woven into existing workflows, with a shared understanding of who is responsible for what.

2. Experiment broadly, scale deliberately. With agentic AI in healthcare, we are still in an early phase where broad experimentation makes sense. It’s what generates momentum, gets people engaged, and galvanizes the organization to explore new ways of working. But experimentation alone can only take you so far. At some point, you have to look honestly at what is making a difference in clinical practice, and what isn’t. That means making clear choices and saying “no” to some ideas so you can focus on scaling the ones that are working. This is where things tend to get organizationally complex. Scaling a successful use case, whether it’s within our own company or in the healthcare organizations we work with, often requires alignment across multiple disciplines – procurement, engineering, regulatory, clinical, and others. You need to agree on new ways of working together. That takes courage and leadership. It’s only through this kind of cross-functional alignment that we can turn promising pilots into something people actually rely on in their daily work.

3. Reshape management for hybrid human-agent teams. As we assign certain tasks to AI agents, management itself changes. In software engineering, you can already see this shift: engineers describe what they want, AI agents execute parts of the work, and engineers monitor the quality of the outcomes. I believe healthcare will move in a similar direction. We may soon see AI agents “sign out” tasks much like nurses do during shift change. For healthcare leaders, this means they will increasingly manage hybrid teams composed of both human caregivers and AI agents. And that will require new ways of thinking about accountability, oversight, and trust.

4. Build trust from the start. AI agents can only succeed at the bedside when trust is established, and that trust must be earned across the whole care environment. For patients, that starts with transparency. If video monitoring is active, it needs to be clearly indicated, with plain-language explanations of what the system does, what it doesn’t do, and how the patient’s data is used. Care teams play a critical role in that process. Our 2025 Future Health Index report shows that doctors and nurses rank among the most trusted sources when patients seek reassurance about the use of AI in their care. For clinicians, trust is built on reliability, explainability, and control. AI agents need to operate within defined boundaries, with clear audit trails and logic clinicians can actually follow. Care teams need to see what actions were taken and why, and they need the ability to override the system when their judgment calls for it. At the organizational level, governance ties it all together. Oversight structures, safeguards against performance drift, and strong security and privacy protections have to be built in from day one. Ultimately, trust is earned the same way it always has been in healthcare: through consistent, safe performance that demonstrably improves patient care.

From controlled pilots to clinical value

Will agentic AI radically change patient care at the bedside tomorrow? No. I think we need to be realistic. In decades of working in healthcare innovation, I’ve never seen technology advance this quickly. But organizational change rarely moves at the same pace. Clinical workflows, governance, and trust all take time to catch up.

What’s clear is that pressures in acute care are not easing, and incremental fixes will not be enough. This is the moment for disciplined experimentation with AI agents. Start with low-risk workflows, identify concrete pain points, and define success metrics directly tied to those pain points – whether it’s reducing non-actionable alarms or reclaiming nurse time. Establish a clear governance framework with appropriate clinical sign-off where needed. That’s how organizations can move from controlled pilots to agentic innovation at scale.

In the end, it all comes down to outcomes: fewer unnecessary interruptions for nurses like Jayden on a night shift, and more restorative recoveries for patients like Maria.

To learn more about how Philips is helping customers deliver better care to more people with AI-enabled solutions, join us at HIMSS 2026.

References:

[1] Ruppel H, Funk M, Whittemore R. Measurement of Physiological Monitor Alarm Accuracy and Clinical Relevance in Intensive Care Units. Am J Crit Care. 2018 Jan;27(1):11-21. https://pubmed.ncbi.nlm.nih.gov/29292271/

[2] Ruppel H, Dougherty M, Kodavati M, Lasater KB. The association between alarm burden and nurse burnout in U.S. hospitals. Nursing Outlook. 2024;72(6). https://www.sciencedirect.com/science/article/pii/S0029655424001817
